Web of Minds: A Series Exploring the Future of AI Orchestration

Part 1: How Workflow Orchestration Is Quietly Revolutionizing Enterprise AI

What today’s multi-agent systems reveal about tomorrow’s cognitive networks.

In a pharmaceutical research lab in late 2024, something remarkable happened, though you wouldn’t have noticed it from the outside. A team of specialized AI agents coordinated across molecular design, property prediction, and synthetic pathway optimization, compressing drug candidate identification timelines from years to months. No fanfare. No press release. Just a quiet revolution in how artificial intelligence systems work together to solve complex problems.

This is workflow orchestration — the first phase of a profound transformation in how AI operates. While headlines focus on ever-larger language models and their impressive parlor tricks, a more fundamental shift is happening in the plumbing. AI systems are learning to collaborate, divide labor, and coordinate complex tasks without human micromanagement at every step.

For senior leaders trying to separate signal from noise in the AI landscape, understanding workflow orchestration isn’t just about keeping up with technology trends. It’s about recognizing the foundation being laid for how your organization will operate in three to five years. Microsoft’s 2025 Work Trend Index, surveying 31,000 workers across 31 countries, reveals that 82 percent of leaders say this is a pivotal year to rethink key aspects of strategy and operations, while 81 percent expect agents to be moderately or extensively integrated into their company’s AI strategy in the next 12 to 18 months. The systems being built today in pharmaceutical R&D, financial services, and supply chain management aren’t just solving today’s problems — they’re establishing the protocols and patterns for tomorrow’s cognitive infrastructure.

The Pharmaceutical Discovery That Reveals the Pattern

Let’s return to that drug discovery breakthrough. The multi-agent framework coordinated specialized computational agents to work synergistically across molecular design, property prediction, synthetic pathway optimization, and multi-criteria decision-making. But here’s what makes this significant: the system didn’t just speed up existing processes. It revealed something about how AI orchestration actually works at scale.

The Discovery Agent and Simulation Agent collaborated to reduce the time to identify drug candidates from years to months, saving billions in R&D costs. At the same time, the Trial Design Agent dynamically adapted trial protocols based on interim results. Each agent had a specialized function. Each operated with its own model, optimized for its specific task. But the real innovation was in how they coordinated — passing context, validating outputs, and escalating decisions when human judgment was required.

The pattern that emerged mirrors something we’ve seen before in human organizations: specialization creates efficiency, but only when paired with effective coordination. As of October 2025, AI spending in pharma is expected to reach $3 billion, with 68% of life science professionals using AI in 2024. This isn’t hype — it’s infrastructure investment in a new operational model.

ConcertAI’s system analyzes data from tens of thousands of cancer patients, rapidly spots treatment patterns, and suggests optimal clinical trial designs, demonstrating how multi-agent systems bring transparency through reasoning capabilities. As one industry observer noted, when you add reasoning components to these systems, agents can show their work and explain the logic behind recommendations — critical in fields like medicine where accountability matters.

What Workflow Orchestration Actually Looks Like Today

Strip away the buzzwords, and workflow orchestration is deceptively simple in concept: multiple AI agents, each specialized for specific tasks, working together under a coordination framework to accomplish complex objectives that would overwhelm a single system.

In practice, it looks nothing like the science fiction version of AI. There’s no central superintelligence pulling strings. Instead, imagine a well-run project team where each member has deep expertise in their domain, clear responsibilities, and established protocols for handing work to colleagues. Now replace the humans with AI agents, and you have the essence of Phase 1 orchestration.

The architecture breaks down into three essential components:

Specialized agents with focused capabilities. Each agent is optimized for a specific type of task: one might handle natural language queries, another access databases, a third perform calculations, and a fourth synthesize information into reports. This specialization lets each agent focus on one area of the problem domain. In a software development workflow, for example, a coordinator agent manages overall planning, a programming agent generates code and test cases, and a code review agent provides constructive feedback.

A supervisor or orchestration layer. This doesn’t think or reason in the human sense — it routes tasks, manages handoffs, tracks state, and ensures outputs from one agent become inputs to the next in the correct sequence. The supervisor breaks down requests, delegates tasks, and consolidates outputs into a final response.

Defined workflows and protocols. Unlike future phases where agents will negotiate and adapt, Phase 1 systems operate within predetermined patterns. If a user asks about their mortgage application, the supervisor knows to route to the mortgage agent. If that agent needs credit history, it knows which database agent to call and what format to expect in return.
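The routing behavior described above can be sketched in a few lines. This is a minimal illustration, assuming a keyword-based lookup; the agent functions, the `ROUTES` table, and the credit-history return format are hypothetical stand-ins rather than any platform's API.

```python
from typing import Callable, Dict

# Hypothetical specialist agents; real ones would call a model or a database.
def credit_history_agent(applicant_id: str) -> Dict[str, object]:
    # Fixed return format that the mortgage agent is written to expect.
    return {"applicant_id": applicant_id, "score": 712}

def mortgage_agent(query: str) -> str:
    credit = credit_history_agent("A-100")  # predetermined handoff
    return (f"Mortgage status for {credit['applicant_id']}: "
            f"in review (score {credit['score']})")

def travel_agent(query: str) -> str:
    return "Travel itinerary: pending"

# Predetermined routing table: a Phase 1 supervisor looks up, it does not negotiate.
ROUTES: Dict[str, Callable[[str], str]] = {
    "mortgage": mortgage_agent,
    "travel": travel_agent,
}

def supervisor(query: str) -> str:
    """Route a query to the first agent whose keyword appears in it."""
    lowered = query.lower()
    for keyword, agent in ROUTES.items():
        if keyword in lowered:
            return agent(query)
    return "ESCALATE: no agent registered for this request"

print(supervisor("What is the status of my mortgage application?"))
```

A production supervisor would typically use a model to classify intent, but the shape is the same: a fixed set of known agents and an explicit fallback when nothing matches.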

This might sound rigid, but it’s this very structure that makes Phase 1 orchestration work reliably enough for enterprise deployment. In one reported evaluation, a multi-agent collaboration approach achieved a 90 percent success rate across travel planning, mortgage financing, and software development domains, while single-agent approaches scored only 60 percent, 80 percent, and 53 percent, respectively.

The Capacity Gap Driving Adoption

The push toward orchestration isn’t driven by technological possibility alone — it’s driven by necessity. Microsoft’s research reveals a capacity gap that traditional solutions cannot address: 53 percent of leaders say productivity must increase, yet 80 percent of the global workforce reports lacking the time or energy to do their work. During core work hours, employees are interrupted every two minutes by meetings, emails, or pings — 275 times per day. Chats outside the 9-to-5 workday are up 15 percent year-over-year, with 58 messages now arriving before or after work hours.

This isn’t sustainable. Intelligence has traditionally been one of business’s most valuable yet limited assets, bound by human time, energy, and cost. With workflow orchestration, that’s changing. Intelligence is becoming what economists call a durable good: abundant, affordable, and available on demand. Already, 82 percent of leaders say they’re confident they’ll use digital labor to expand workforce capacity in the next 12 to 18 months.

Real-World Implementations Across Industries

While pharmaceutical research makes for compelling examples, workflow orchestration is quietly transforming operations across sectors:

Financial Services: A Gartner survey of 121 finance leaders found that 58% of finance functions were using AI in 2024, up 21 percentage points from 2023. Multi-agent systems for fraud detection analyze transaction patterns, cross-reference databases, and flag anomalies in real time. In fiscal year 2024, the U.S. Treasury reported that enhanced fraud detection processes, including machine learning AI, helped prevent and recover more than $4 billion in fraud and improper payments, demonstrating a measurable impact.

The architecture typically involves specialized agents for different fraud patterns — one looking for transaction-velocity anomalies, another for geographic impossibilities, a third for behavioral deviations — coordinated by a supervisor who weighs evidence and determines risk scores. Multi-agent systems demonstrate particular promise in complex domains such as algorithmic trading and fraud detection, with specialized agent frameworks achieving 50-80% productivity gains over traditional approaches on financial data tasks.
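As a rough sketch of that architecture, the example below has three detector agents each emit a signal between 0 and 1, and a supervisor combine them into a weighted risk score. The thresholds, weights, and field names are illustrative assumptions, not values from any deployed system.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass
class Transaction:
    amount: float
    typical_amount: float          # customer's usual spend (assumed known, nonzero)
    country: str
    previous_country: str
    minutes_since_previous: float

def velocity_agent(tx: Transaction) -> float:
    # Flags back-to-back transactions; the 1-minute cutoff is illustrative.
    return 1.0 if tx.minutes_since_previous < 1.0 else 0.0

def geography_agent(tx: Transaction) -> float:
    # Flags a country change too fast to travel; 60 minutes is illustrative.
    moved = tx.country != tx.previous_country
    return 1.0 if moved and tx.minutes_since_previous < 60.0 else 0.0

def behavior_agent(tx: Transaction) -> float:
    # Scales from 0 at typical spend to 1 at ten times typical spend.
    ratio = tx.amount / tx.typical_amount
    return max(0.0, min(1.0, (ratio - 1.0) / 9.0))

# Hypothetical evidence weights used by the supervisor.
WEIGHTS: Dict[str, float] = {"velocity": 0.3, "geography": 0.4, "behavior": 0.3}

def supervisor_risk_score(tx: Transaction) -> float:
    """Combine the specialist signals into a single score in [0, 1]."""
    signals = {
        "velocity": velocity_agent(tx),
        "geography": geography_agent(tx),
        "behavior": behavior_agent(tx),
    }
    return sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)

routine = Transaction(40.0, 50.0, "US", "US", 600.0)
suspicious = Transaction(500.0, 50.0, "FR", "US", 0.5)
print(round(supervisor_risk_score(routine), 2), round(supervisor_risk_score(suspicious), 2))
```

Keeping each detector separate means a new fraud pattern can be added as another agent and another weight without touching the existing ones.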

Notably, Microsoft’s Work Trend Index identifies customer service, marketing, and finance as the top areas where leaders expect accelerated AI investment in the next 12 to 18 months. Among Frontier Firms, leaders are far more likely than average workers to use AI for tasks related to data science (72 percent versus 54 percent globally) and financial analysis — areas where orchestrated agent systems deliver immediate value.

Customer Service Operations: Organizations are deploying agent teams where one handles natural language understanding, another accesses customer records, a third checks inventory or order status, and a fourth formulates responses. Northwestern Mutual transformed its internal developer support using multi-agent orchestration, reducing response times from hours to minutes and freeing support engineers to focus on complex issues.

Supply Chain Management: Multiple agents monitor inventory levels, track shipments, predict demand, and optimize routing — each operating on different data sources and timescales, but coordinating through a central orchestration framework to surface insights and recommendations.

These implementations share common characteristics: they handle bounded domains with clear success metrics, operate with human oversight, and rely on predetermined coordination patterns rather than emergent collaboration.

The Technology Stack Enabling Orchestration

For leaders evaluating orchestration strategies, understanding the technology landscape matters less than understanding the strategic choices these technologies represent. Three major platform approaches have emerged, each reflecting different philosophies about control, flexibility, and operational overhead.

Cloud Platform Approaches: Amazon Bedrock

Amazon Bedrock’s multi-agent collaboration capability enables developers to build, deploy, and manage multiple specialized agents working together seamlessly to tackle intricate, multi-step workflows. The platform emphasizes rapid deployment with managed infrastructure — you define agents, specify their roles and capabilities, and the platform handles orchestration, session management, and scaling.

The multi-agent collaboration framework starts with a hierarchical approach comprising key components designed to optimize performance and efficiency. Supervisor agents coordinate specialist sub-agents, breaking down complex queries into manageable subtasks.

The platform’s strength lies in enterprise-grade reliability and minimal operational overhead. Organizations can deploy sophisticated multi-agent systems without building custom orchestration infrastructure. The tradeoff? Less flexibility in orchestration logic compared to building from scratch with open-source frameworks.

Microsoft’s Copilot Studio

Microsoft announced multi-agent orchestration capabilities at Build 2025, enabling agents to exchange data, collaborate on tasks, and divide their work based on each agent’s expertise. Already, more than 230,000 organizations use Copilot Studio to create and customize agents, with projections estimating 1.3 billion AI agents across businesses by 2028.

Multi-agent orchestration in Copilot Studio allows agents built with Microsoft 365, Microsoft Azure AI, and Microsoft Fabric to collaborate by delegating tasks and sharing results to complete complex workflows. The platform also supports the open Agent2Agent protocol, allowing connections to agents built on third-party platforms.

Copilot Studio represents Microsoft’s bet on low-code development and deep integration with the Microsoft 365 ecosystem. Organizations already invested in Microsoft tools find natural synergies, particularly for agents that need to interact with Word, Excel, SharePoint, and Teams.

Open Source Frameworks: LangChain, LangGraph, CrewAI

The open-source ecosystem offers maximum flexibility at the cost of more hands-on development and operation. LangChain popularized chains, agents, and memory integrations as a modular orchestrator; AutoGen provides a conversation-first multi-agent framework built for collaboration; and CrewAI delivers a role-based task execution engine designed for team-oriented agents.

LangGraph allows developers to define agent workflows as stateful graphs, steering the framework towards multi-agent systems, iterative refinement loops, and deterministic task orchestration. Each node in the graph represents an operation or agent action, with edges defining control flow and data movement.
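To make the stateful-graph idea concrete, here is a plain-Python sketch of nodes, edges, and a refinement loop. It deliberately does not use LangGraph's own API; the `draft` and `review` nodes, the `next_node` edge function, and the approval rule are all hypothetical.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]

def draft(state: State) -> State:
    # Each pass through this node produces a new revision.
    revisions = int(state.get("revisions", 0)) + 1
    return {**state, "draft": f"draft v{revisions}", "revisions": revisions}

def review(state: State) -> State:
    # Hypothetical rule: approve once the draft has been revised twice.
    return {**state, "approved": int(state["revisions"]) >= 2}

NODES: Dict[str, Node] = {"draft": draft, "review": review}

def next_node(current: str, state: State) -> Optional[str]:
    """Edges: draft -> review; review -> draft until approved, then stop."""
    if current == "draft":
        return "review"
    if current == "review" and not state["approved"]:
        return "draft"  # iterative refinement loop
    return None

def run(entry: str, state: State, max_steps: int = 10) -> State:
    current: Optional[str] = entry
    for _ in range(max_steps):  # hard cap so a bad edge cannot loop forever
        if current is None:
            break
        state = NODES[current](state)
        current = next_node(current, state)
    return state

final = run("draft", {})
print(final)
```

The state dictionary is the only thing that moves between nodes, which is what makes the flow deterministic and easy to replay.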

CrewAI gained traction through its lower learning curve and extensive documentation, enabling developers to engineer teams of intelligent agents that work in tandem. The framework structures interaction through role assignment and goal specification, with built-in functionality for task delegation, sequencing, and state management.

For organizations with strong technical capabilities and specific requirements that platform solutions do not meet, these frameworks provide the building blocks. But they demand expertise in prompt engineering, state management, error handling, and distributed systems — capabilities not every organization possesses.

The Hidden Complexity No One Talks About

Here’s what the vendor demos don’t show you: connecting multiple AI agents is vastly more complicated than stitching together APIs. The challenges that emerge reveal why workflow orchestration remains in Phase 1, and why the leap to Phase 2 self-coordination represents a genuine technical frontier.

The Context Window Problem

Each agent operates within constraints — most importantly, the context window, which limits how much information it can process at once. When Agent A completes a task and hands it off to Agent B, how much context transfers? Pass too little, and Agent B lacks the necessary information. Pass too much, and you exceed context limits or introduce noise that degrades performance.

Current orchestration frameworks handle this through careful prompt engineering and selective context passing. The supervisor decides what information each agent needs, a brittle, manual process that requires constant tuning. Still, distributing the work helps: when presented with many tools, a single agent tended to hallucinate tool calls and failed to reject some out-of-scope requests, while multi-agent approaches with distributed problem-solving proved more resilient.
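One simple way to picture selective context passing is a budgeted filter: keep the most relevant items that fit, drop the rest. The sketch below assumes relevance scores already exist and counts tokens as whitespace-separated words, which is a crude stand-in for a real tokenizer.

```python
from typing import List, Tuple

def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())

def select_context(items: List[Tuple[float, str]], budget: int) -> List[str]:
    """Keep the highest-relevance items that fit within the token budget."""
    chosen: List[str] = []
    used = 0
    for _relevance, text in sorted(items, key=lambda pair: pair[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= budget:
            chosen.append(text)
            used += cost
    return chosen

# (relevance, text) pairs; the scores here are made up for illustration.
history = [
    (0.9, "Applicant requested a 30 year fixed mortgage"),
    (0.2, "User greeted the assistant and asked about the weather today"),
    (0.7, "Credit score is 712"),
]
handoff = select_context(history, budget=12)
print(handoff)
```

The hard part in practice is the relevance score itself, which is where the manual tuning mentioned above tends to concentrate.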

Error Propagation and Recovery

In traditional software, errors cascade predictably. In multi-agent systems, errors compound unpredictably. Agent A misunderstands a query. Agent B receives malformed input and generates garbage output. Agent C attempts to synthesize the garbage, hallucinates to fill gaps, and presents a confident but completely wrong answer to the user.

Debugging these cascades requires sophisticated tracing capabilities — understanding exactly what each agent received, how it interpreted inputs, what decisions it made, and what outputs it generated. Amazon Bedrock integrated debugging capabilities via a trace and debug console, enabling developers to monitor and analyze inter-agent communication. Without such visibility, troubleshooting becomes nearly impossible.
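A lightweight version of this idea is to validate every handoff and record it in a trace, so a malformed payload stops at the boundary instead of flowing downstream. The sketch below is illustrative only; `checked_handoff`, `HandoffError`, and the required-field lists are hypothetical names, not part of any vendor's tooling.

```python
from typing import Dict, List

TRACE: List[Dict[str, object]] = []

class HandoffError(ValueError):
    """Raised when a payload is missing fields the receiver requires."""

def checked_handoff(sender: str, receiver: str,
                    payload: Dict[str, object],
                    required: List[str]) -> Dict[str, object]:
    # Record what was sent before deciding whether to let it through.
    missing = [key for key in required if key not in payload]
    TRACE.append({"from": sender, "to": receiver,
                  "payload": dict(payload), "missing": missing})
    if missing:
        raise HandoffError(f"{sender} -> {receiver} missing fields: {missing}")
    return payload

# A well-formed handoff passes through unchanged.
checked_handoff("agent_a", "agent_b",
                {"query": "refund status", "order_id": 17},
                ["query", "order_id"])

# A malformed handoff is stopped before Agent C can synthesize from it.
try:
    checked_handoff("agent_b", "agent_c",
                    {"query": "refund status"},
                    ["query", "order_id"])
except HandoffError as err:
    print(f"stopped cascade: {err}")
```

Because the trace is written even when the check fails, the record of what each agent received survives for later debugging.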

The Cost Multiplication Factor

Each agent interaction typically involves API calls to large language models. A simple user query might trigger five agents, each making three model calls, at 15 cents per call. That’s 15 calls and $2.25 per query: sustainable for high-value use cases like drug discovery, but prohibitive for customer service chatbots handling thousands of daily queries.

Organizations deploying orchestration must balance capability against cost. Sometimes this means using smaller, cheaper models for routine tasks while reserving powerful models for complex reasoning. Sometimes it means aggressive caching to avoid redundant calls. It always means careful monitoring of usage patterns and costs.
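The arithmetic is easy to check, and it shows why model tiering matters. The sketch below works in integer cents to avoid rounding noise. The 15-cent price and the five-agent, three-call shape come from the example above; the 1-cent routine model, the 12/3 split, and the 5,000 daily queries are hypothetical.

```python
from typing import Dict

def query_cost_cents(calls_by_price: Dict[int, int]) -> int:
    """Sum cost in cents for a mapping of price-per-call (cents) -> number of calls."""
    return sum(price * calls for price, calls in calls_by_price.items())

AGENTS, CALLS_PER_AGENT, PRICE_CENTS = 5, 3, 15

# Every call goes to the expensive model: 15 calls at 15 cents.
baseline = query_cost_cents({PRICE_CENTS: AGENTS * CALLS_PER_AGENT})  # 225 cents

# Hypothetical tiering: 12 routine calls on a 1-cent model, 3 reasoning calls at 15 cents.
tiered = query_cost_cents({1: 12, PRICE_CENTS: 3})  # 57 cents

DAILY_QUERIES = 5_000  # hypothetical volume
for label, cents in (("baseline", baseline), ("tiered", tiered)):
    per_day = cents * DAILY_QUERIES / 100
    print(f"{label}: ${cents / 100:.2f} per query, ${per_day:,.2f} per day")
```

Under these assumed numbers, routing routine calls to a cheaper model cuts the per-query cost by roughly three quarters, before any caching is applied.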

The Human-in-the-Loop Requirement

Phase 1 orchestration succeeds precisely because it maintains human oversight at critical junctures. Federated learning with differential privacy lets agents learn from sensitive patient data without pooling the raw records, and automated MLOps pipelines handle continuous model retraining, but humans still validate high-stakes decisions.

This requirement constrains deployment scenarios. An agent team analyzing financial data can operate overnight and present findings in the morning. An agent team making real-time trading decisions requires a different architecture — either very high confidence thresholds or humans constantly monitoring and ready to intervene.
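A confidence-threshold gate of the kind described can be sketched as follows. It assumes each agent reports a confidence score between 0 and 1, which not every system does; the `Decision` fields and the 0.95 default are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    action: str
    confidence: float   # agent's self-reported score in [0, 1] (an assumption)
    high_stakes: bool   # set by policy, not by the agent

def route_decision(decision: Decision, threshold: float = 0.95) -> str:
    """Send high-stakes or low-confidence decisions to a human reviewer."""
    if decision.high_stakes or decision.confidence < threshold:
        return "human_review"
    return "auto_execute"

print(route_decision(Decision("rebalance report", 0.99, high_stakes=False)))
print(route_decision(Decision("execute trade", 0.99, high_stakes=True)))
print(route_decision(Decision("flag anomaly", 0.60, high_stakes=False)))
```

Raising the threshold shifts more work to reviewers, so the setting is as much a staffing decision as a technical one.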

Who’s Getting This Right and What They’re Learning

Beyond the headline deployments, patterns are emerging from successful implementations. Organizations solving these challenges are discovering principles that will shape Phase 2 development.

Pattern 1: Start Smaller Than You Think

The most successful deployments begin with three agents or fewer, handling well-defined workflows with clear success criteria. Northwestern Mutual deployed a multi-agent orchestration framework for internal developer support, achieving measurable impact by reducing response times from hours to minutes. They started narrow, proved value, then expanded gradually.

This pattern is supported by Microsoft’s research on Frontier Firms — organizations that have deployed AI organization-wide, with high scores in AI maturity, active agent use, and plans for extensive integration. Among these early adopters, 71 percent of leaders say their companies are thriving, compared with just 39 percent of workers globally. These organizations understand something crucial: you prove value before scaling.

Contrast this with failed proofs-of-concept that attempt to orchestrate eight agents handling ambiguous business processes from day one. They inevitably discover that every additional agent multiplies the ways things can go wrong: four agents have six possible pairwise handoffs, while eight have 28. By the time they’ve debugged handoffs between Agents 1–4, Agents 5–8 have introduced new failure modes.

Pattern 2: Obsess Over Agent Specialization

Organizations must clearly designate the role and responsibilities of the supervisor agent and every collaborator agent on the team and minimize overlapping responsibilities. When agents have overlapping capabilities, the supervisor struggles to route correctly. When agents have coverage gaps, tasks fall through the cracks between them.

The best implementations define agents around natural information boundaries — one agent per data source, one agent per distinct analytical task, one agent per output format. Each agent becomes an expert in its narrow domain, and handoffs occur at natural workflow seams.

Pattern 3: Invest in Observability from Day One

You cannot improve what you cannot measure, and you cannot debug what you cannot observe. Agent Evaluation in Microsoft Copilot Studio enables structured, automated testing directly on the platform, allowing makers to create evaluation sets, select test methods, define success measures, and run tests.

Successful organizations build comprehensive logging of agent interactions, decision points, and performance metrics before deploying to production. They create dashboards showing which agents handle which query types, where handoffs succeed or fail, and how costs accumulate. This telemetry becomes the foundation for optimization.
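The dashboards described here usually start as a simple roll-up of logged events. The sketch below assumes each event records the agent name, whether the call succeeded, and its cost in cents; the event schema and agent names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

def summarize(events: List[Dict[str, object]]) -> Dict[str, Dict[str, int]]:
    """Aggregate per-agent call counts, failures, and cost from raw events."""
    summary: Dict[str, Dict[str, int]] = defaultdict(
        lambda: {"calls": 0, "failures": 0, "cost_cents": 0}
    )
    for event in events:
        row = summary[str(event["agent"])]
        row["calls"] += 1
        row["failures"] += 0 if event["ok"] else 1
        row["cost_cents"] += int(event["cost_cents"])
    return dict(summary)

# Made-up events for illustration.
events = [
    {"agent": "retriever", "ok": True, "cost_cents": 15},
    {"agent": "retriever", "ok": False, "cost_cents": 15},
    {"agent": "formatter", "ok": True, "cost_cents": 1},
]
report = summarize(events)
for agent, row in report.items():
    print(agent, row)
```

Even this small roll-up answers the first questions an operator asks: which agent fails most, and which one costs the most.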

Pattern 4: Plan for Governance Before Scale

As agent systems proliferate, governance becomes critical. Microsoft Purview Information Protection extends to Copilot Studio agents that leverage Microsoft Dataverse, enabling organizations to automatically classify and protect sensitive information at scale and ensure AI-driven solutions align with enterprise compliance and governance requirements.

Leading organizations establish agent registries cataloging all deployed agents, their capabilities, data access permissions, and approval workflows. They implement controls ensuring agents can only access data they’re authorized to use. They establish review processes for agents making consequential decisions.
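An agent registry can begin as a small catalog with a single access check. The sketch below is a minimal illustration; the `AgentRecord` fields, the `approved` flag, and the scope names are assumptions, and a real deployment would back this with an identity and policy system.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

@dataclass(frozen=True)
class AgentRecord:
    name: str
    owner: str
    capabilities: FrozenSet[str]
    data_scopes: FrozenSet[str]
    approved: bool = False  # set only after the review process signs off

class AgentRegistry:
    def __init__(self) -> None:
        self._records: Dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.name in self._records:
            raise ValueError(f"duplicate agent name: {record.name}")
        self._records[record.name] = record

    def can_access(self, agent_name: str, scope: str) -> bool:
        """Deny by default: unknown or unapproved agents get no data access."""
        record = self._records.get(agent_name)
        return bool(record and record.approved and scope in record.data_scopes)

registry = AgentRegistry()
registry.register(AgentRecord("claims_bot", "ops", frozenset({"summarize"}),
                              frozenset({"claims"}), approved=True))
registry.register(AgentRecord("draft_bot", "marketing", frozenset({"write"}),
                              frozenset({"claims"})))
print(registry.can_access("claims_bot", "claims"))
print(registry.can_access("draft_bot", "claims"))
```

The deny-by-default check is the important design choice: an agent that nobody registered or approved cannot read anything.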

Without such governance, organizations wake up to discover 200 agents scattered across departments, some accessing sensitive data without proper controls, others duplicating functionality, and many abandoned but still consuming resources.

Pattern 5: Define Your Human-Agent Ratio

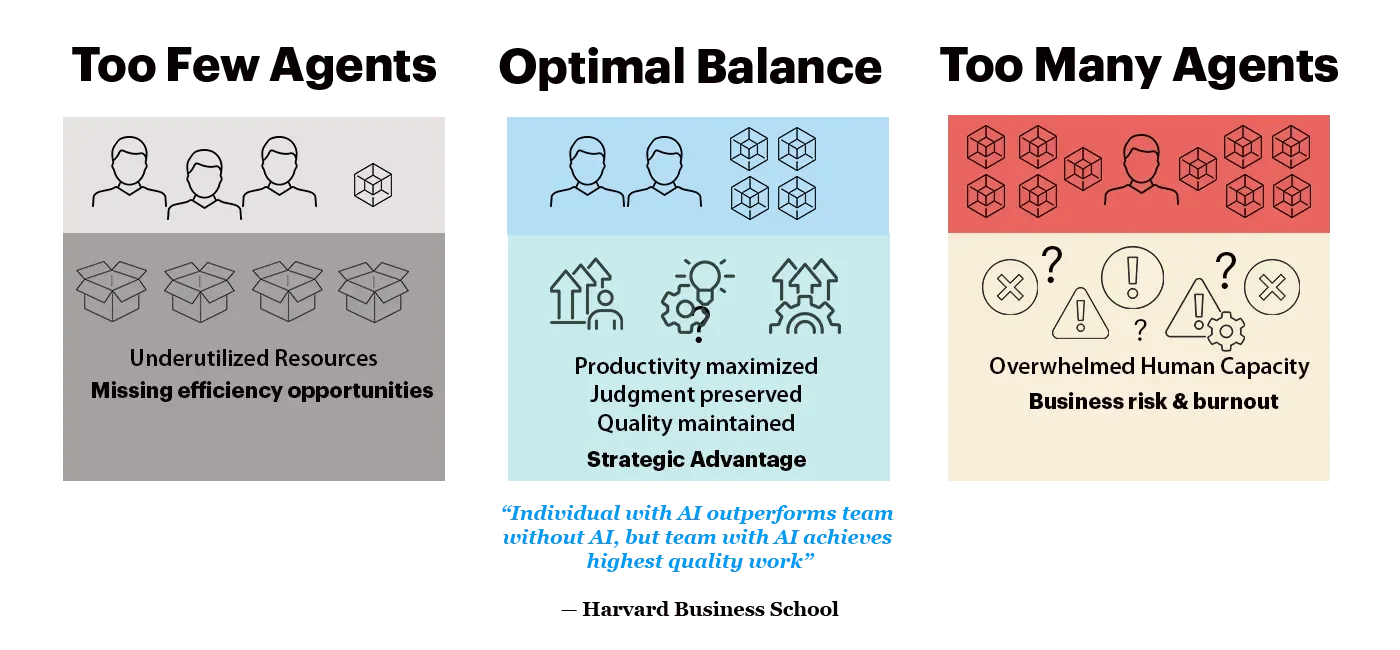

A new strategic consideration is emerging from early deployments: the human-agent ratio. This metric, highlighted in Microsoft’s Work Trend Index, asks fundamental questions: How many agents are needed for each role and task? How many humans are required to guide them?

Harvard research studying nearly 800 employees found that an individual with AI outperforms a team without it, but when it comes to the highest-quality work, a team with AI outperforms them all. The optimal balance depends on the specific function and task. Some processes lend themselves to near-complete automation with minimal human oversight. Others — particularly those requiring judgment, empathy, or creative thinking — demand human leadership with AI augmentation.

The ratio varies by function. Nearly half of leaders (46 percent) say their companies are using agents to fully automate workflows, particularly in customer service, marketing, and product development. Yet economist Daniel Susskind identifies three persistent needs for human involvement: efficiency gains from human-AI collaboration on complex tasks, customer preference for human interaction on high-stakes decisions, and moral accountability requiring human responsibility for consequential outcomes.

Getting this balance right is critical. Too few agents per person underutilize both agentic and human resources, leaving potential efficiencies on the table. Too many agents per person overwhelms human capacity for applying judgment and decision-making, introducing business risk and potential employee burnout. Organizations need new functions — perhaps “Intelligence Resources” departments — to manage the optimal allocation of human and digital labor, much as HR and IT evolved into core organizational functions.

The Path Forward: Implementing Your First Orchestration System

For leaders convinced that workflow orchestration deserves attention, the question becomes tactical: where to start? The answer depends less on technology choices than on organizational readiness and use case selection.

Identifying the Right First Use Case

The ideal starter project shares these characteristics:

Bounded scope with clear success metrics. Can you define in one sentence what the system should accomplish? Can you measure whether it succeeded?

High-frequency, medium-complexity tasks. The sweet spot is work that happens often enough to justify the investment but is complex enough that simple automation doesn’t suffice. Customer support scenarios with specialized AI agents operating as independent microservices, each handling specific business domains through custom coordination logic, represent this balance well.

Tolerance for imperfection during pilot. Early orchestration systems make mistakes. Choose use cases where errors are visible and recoverable, not catastrophic. Internal tools for employees make better pilots than customer-facing applications.

Access to domain experts. You’ll need people who deeply understand the workflow to validate agent behavior, tune prompts, and refine handoffs. Without domain expertise, you’re flying blind.

Building the Minimum Viable Orchestration

Start with three specialized agents and one supervisor:

One agent handles user input interpretation

One agent retrieves or analyzes information

One agent formats and presents results

The supervisor routes queries and coordinates handoffs

The supervisor receives initial queries and breaks them down into sequential tasks, assigning each to the appropriate specialized agent. This four-agent architecture establishes the orchestration pattern without overwhelming complexity.
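The four-agent pattern above can be sketched end to end in plain Python. Everything here is a hypothetical stand-in: the `KNOWLEDGE` lookup replaces a real data source, and each agent is an ordinary function where a production system would call a model.

```python
from typing import Dict, List, Tuple

KNOWLEDGE = {"vacation policy": "20 days per year"}  # hypothetical data source

def interpret_agent(query: str) -> Dict[str, str]:
    # Maps free text to a known topic, or "unknown" if nothing matches.
    topic = next((t for t in KNOWLEDGE if t in query.lower()), "unknown")
    return {"topic": topic}

def retrieval_agent(task: Dict[str, str]) -> Dict[str, str]:
    return {**task, "answer": KNOWLEDGE.get(task["topic"], "")}

def formatting_agent(result: Dict[str, str]) -> str:
    if not result["answer"]:
        return "I could not find that; forwarding to a human."
    return f"{result['topic'].capitalize()}: {result['answer']}"

def supervisor(query: str, log: List[Tuple[str, object]]) -> str:
    """Run the three agents in sequence, logging every handoff."""
    task = interpret_agent(query)
    log.append(("interpret", task))
    result = retrieval_agent(task)
    log.append(("retrieve", result))
    reply = formatting_agent(result)
    log.append(("format", reply))
    return reply

log: List[Tuple[str, object]] = []
print(supervisor("What is the vacation policy?", log))
print([step for step, _ in log])
```

Note that the log and the fallback to a human are present from the first version, which lines up with the development priorities that follow.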

Focus early development on:

Crystal-clear agent responsibilities: Each agent should have one job it does well

Robust handoff protocols: Define exactly what information passes between agents

Comprehensive logging: Capture every interaction for debugging and optimization

Human validation checkpoints: Insert review steps for consequential decisions

Technology and Team Considerations

Platform selection matters less than team capabilities and organizational context. Organizations with 5,000 or more Microsoft 365 Copilot licenses, or those working with Microsoft account teams, can access Copilot Tuning to train models using company data in a simple, low-code way. For Microsoft-centric organizations with limited AI engineering resources, Copilot Studio offers the fastest path to deployment.

Organizations with strong engineering teams, specific requirements, or a preference for control might choose open-source frameworks. LangGraph leverages the broader LangChain ecosystem, with each graph node able to utilize LangChain tools, memory, or chains, providing flexibility at the cost of more hands-on development.

For AWS-invested organizations, Amazon Bedrock Agents can be combined with open source orchestration frameworks LangGraph and CrewAI for dispatching and reasoning, blending managed services with custom orchestration logic.

The workforce implications are significant. Microsoft’s research shows that 78 percent of leaders are considering hiring for AI-specific roles to prepare for the future, jumping to 95 percent for Frontier Firms. Top roles under consideration include AI trainers, data specialists, security specialists, AI agent specialists, and ROI analysts. Yet upskilling existing employees remains the top workforce strategy for the next 12 to 18 months, cited by 47 percent of leaders, just ahead of expanding team capacity with digital labor at 45 percent.

Budget three to six months for a first pilot with a small, focused team:

One AI/ML engineer for prompt engineering and model integration

One backend engineer for system integration and API development

One domain expert for workflow validation and testing

Part-time product owner for requirements and prioritization

Costs will vary by volume, but expect $5,000-$20,000 in API calls per month during development and early deployment, scaling with usage once in production.

Measuring Success and Learning

Define success metrics before building:

Task completion rate: What percentage of queries does the system fully resolve?

Accuracy: When the system provides answers, how often are they correct?

Speed: How long from query to response compared to the previous process?

Cost per interaction: Total system costs divided by successful resolutions

User satisfaction: For internal tools, survey users regularly
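Three of these metrics can be computed directly from interaction logs, as in the sketch below. It assumes each logged interaction carries boolean `answered`, `correct`, and `resolved` flags, which is a hypothetical schema; speed and user satisfaction need timing data and surveys and are left out.

```python
from typing import Dict, List, Optional

def pilot_metrics(interactions: List[Dict[str, bool]],
                  total_cost: float) -> Dict[str, Optional[float]]:
    """Compute completion rate, accuracy, and cost per successful resolution."""
    total = len(interactions)
    answered = sum(1 for i in interactions if i["answered"])
    correct = sum(1 for i in interactions if i["answered"] and i["correct"])
    resolved = sum(1 for i in interactions if i["resolved"])
    return {
        # None signals "not enough data" instead of dividing by zero.
        "completion_rate": resolved / total if total else None,
        "accuracy": correct / answered if answered else None,
        "cost_per_resolution": total_cost / resolved if resolved else None,
    }

# Made-up pilot data for illustration.
sample = [
    {"answered": True, "correct": True, "resolved": True},
    {"answered": True, "correct": True, "resolved": True},
    {"answered": True, "correct": False, "resolved": False},
    {"answered": False, "correct": False, "resolved": False},
]
metrics = pilot_metrics(sample, total_cost=9.0)
print(metrics)
```

Dividing cost by successful resolutions rather than by all queries keeps the metric honest: a system that answers cheaply but wrongly looks expensive, as it should.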

More important than any single metric is the learning you extract. Which agent responsibilities proved hardest to define? Where did handoffs break down most often? What types of queries exceeded the system’s capabilities? These insights inform both optimization of the current system and design of future ones.

The Technical Bottlenecks Preventing Phase 2

Understanding why we remain in Phase 1 illuminates the path ahead. The limitations aren’t arbitrary — they reflect genuine technical challenges that current architectures cannot yet overcome.

Static workflow topology: Today’s orchestration systems operate within predefined patterns. The supervisor knows which agents exist and when to invoke them because developers hardcoded these decisions. Agents cannot discover each other, negotiate new collaboration patterns, or form dynamic coalitions based on task requirements. Moving beyond this requires agents to share common semantic frameworks for describing their capabilities — a standardization problem as much as a technical one.

Context management across extended workflows: As workflows grow longer and more complex, maintaining coherent context becomes increasingly tricky. Future systems will need sophisticated context compression, relevance filtering, and selective memory — capabilities that remain active areas of research.

Trust and verification in open networks: Phase 1 orchestration works within organizational boundaries where trust is assumed. Phase 2, in which agents from different organizations collaborate, requires robust mechanisms to verify agent identity, capabilities, and reliability. This implies cryptographic protocols, reputation systems, and audit frameworks that don’t yet exist in a mature form.

Optimization without human specification: Current systems optimize what humans tell them to optimize. Phase 2 agents will need to identify optimization opportunities, propose new collaboration patterns, and autonomously evaluate trade-offs — requiring goal representation and reasoning capabilities beyond today’s systems.

What’s Coming Next: Early Signals of Phase 2

The transition from static orchestration to dynamic coordination isn’t a binary flip but a gradual accumulation of capabilities. We’re seeing early signals:

Research prototypes demonstrating dynamic coalition formation. Labs are building systems in which agents advertise their capabilities and bid for tasks, forming temporary teams based on current needs rather than predetermined workflows. These remain research demonstrations, but they prove feasibility.
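The advertise-and-bid idea can be illustrated with a toy auction. This is a sketch of the general pattern only, not a reconstruction of any specific research system; the agent list, the load figures, and the "bid equals spare capacity" rule are all assumptions.

```python
from typing import Any, Dict, List, Optional

# Each agent advertises its capabilities and its current load (0 = idle, 1 = full).
AGENTS: List[Dict[str, Any]] = [
    {"name": "translator_a", "capabilities": {"translate"}, "load": 0.8},
    {"name": "translator_b", "capabilities": {"translate", "summarize"}, "load": 0.3},
    {"name": "summarizer", "capabilities": {"summarize"}, "load": 0.1},
]

def bid(agent: Dict[str, Any], capability: str) -> Optional[float]:
    """An agent bids its spare capacity, or declines if it lacks the capability."""
    if capability not in agent["capabilities"]:
        return None
    return 1.0 - agent["load"]

def award(capability: str, agents: List[Dict[str, Any]]) -> Optional[str]:
    """Collect bids and award the task to the highest bidder, if any."""
    bids = []
    for agent in agents:
        offer = bid(agent, capability)
        if offer is not None:
            bids.append((offer, agent["name"]))
    return max(bids)[1] if bids else None

print(award("translate", AGENTS))
print(award("summarize", AGENTS))
print(award("plan", AGENTS))
```

The difference from the Phase 1 routing table is that nothing here names which agent handles which task in advance; the assignment falls out of what the agents advertise at the moment the task arrives.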

Standardization efforts for agent communication. Copilot Studio will support the open Agent2Agent protocol, allowing agents to connect to those built on third-party platforms. Such standards, if widely adopted, enable the cross-organizational agent collaboration defining Phase 2.

Advances in agent reasoning and planning. Agent flows automate tasks with predictability and consistency and can include AI-driven intelligence to handle the complexity of today’s enterprise scenarios efficiently, while deep reasoning enables agents to execute complex, multifaceted business processes. As these capabilities mature, agents gain the cognitive tools needed for autonomous coordination.

Watch for these developments in 2025–2026:

Commercial platforms adding dynamic agent discovery and negotiation features

Industry groups establishing semantic standards for agent capability description

Regulatory frameworks emerging for cross-organizational AI collaboration

Demonstration projects showing agents from different vendors coordinating without custom integration

Where We Stand

Workflow orchestration represents the foundation of a profound shift in how AI operates — from isolated models responding to individual queries to networks of specialized agents collaborating on complex objectives. We’re further along than headlines suggest; the infrastructure being built in pharmaceutical research, financial services, and customer support is real, deployed, and delivering measurable value.

The data confirms this trajectory. Microsoft’s research shows that 24 percent of leaders say their companies have already deployed AI organization-wide, while just 12 percent remain in pilot mode. The era of experimentation is ending; the era of production deployment has begun. Organizations aren’t waiting for perfect solutions — they’re learning by doing, establishing patterns, and building expertise that will compound into significant advantages.

Yet we’re still very early. Phase 1 systems operate within carefully bounded domains, rely on human oversight, and follow predetermined patterns. They succeed by solving significant but constrained problems rather than by exhibiting genuine autonomy or flexibility.

The limitations we’re hitting — static workflows, challenges with context management, trust and verification gaps — aren’t insurmountable. They’re the next frontier. Organizations investing in orchestration today are learning lessons that will prove invaluable when Phase 2 capabilities emerge. They’re establishing the governance frameworks, operational patterns, and organizational capabilities that tomorrow’s more sophisticated systems will require.

For leaders, the imperative is clear: understand workflow orchestration not as a destination but as a foundation. Microsoft’s research suggests that within the next two to five years, every organization will be on its journey to becoming a Frontier Firm — structured around on-demand intelligence and powered by hybrid teams of humans and agents. The question isn’t whether to engage but how quickly you can start learning. The organizations building these systems today are developing expertise, establishing patterns, and discovering failure modes that will compound into significant advantages as capabilities evolve.

Next in this series: How AI agents will learn to coordinate themselves, negotiating collaboration patterns and forming dynamic coalitions — the breakthrough that transforms orchestration from workflow automation to genuine collective intelligence.